Artificial intelligence is reshaping how people discover and consume information online. Instead of browsing multiple websites through traditional search engines, users increasingly rely on AI assistants to summarize topics, compare products, explain concepts, and recommend solutions. This shift introduces a new layer between users and websites: AI systems that interpret and present information before a user ever visits the source.

For developers, marketers, and SEO professionals, this raises a crucial question: how can you ensure AI systems understand your content correctly and represent it accurately?

One emerging concept attempting to address this challenge is llms.txt. This proposed file format is designed to guide Large Language Models (LLMs) toward the most important and context-rich content on a website. While still experimental, it reflects a broader effort to adapt web infrastructure for an AI-driven discovery ecosystem.

This article explains what llms.txt is, why it was proposed, how it works, who is experimenting with it, and whether it deserves your attention.

The Shift from Search Engines to AI-Driven Answers

For decades, digital visibility revolved around search engine optimization. Websites competed for rankings by optimizing keywords, building backlinks, and improving technical performance. Users searched, scanned results, clicked links, and explored websites for answers.

Today, discovery behavior is evolving. Users increasingly ask AI assistants to deliver immediate answers instead of presenting them with a list of links. These tools synthesize information from multiple sources, summarize insights, and often eliminate the need to visit individual websites.

This evolution has introduced new concepts such as Answer Engine Optimization (AEO) and AI discoverability. In this environment, content clarity, semantic structure, and contextual accuracy matter more than keyword density.

AI systems do not merely index content; they interpret it. If your content lacks structure or clarity, it may be misunderstood, misrepresented, or excluded from AI-generated responses. This emerging reality has motivated the exploration of tools like llms.txt.

What Is llms.txt?

llms.txt is a proposed standard designed to help AI systems locate and interpret the most important content on a website. Instead of listing every page, it acts as a curated guide highlighting authoritative and context-rich resources.

A useful way to understand its role is by comparing it with existing web files.

| File | Primary Role | How It Helps Machines |

|---|---|---|

| robots.txt | Controls crawler access | tells bots what they can or cannot crawl |

| sitemap.xml | Lists site URLs | helps discovery and indexing |

| llms.txt | Curates key resources | guides AI toward high-value content |

Unlike a sitemap, which is comprehensive, llms.txt is selective. Its goal is to reduce ambiguity by directing AI systems toward pages that provide definitive and reliable information.

The primary objective is to improve accuracy and reduce hallucinations by guiding AI models toward trustworthy sources.

Why llms.txt Was Proposed

Large Language Models process enormous volumes of web content. While powerful, they face several challenges when interpreting websites.

One major challenge is content ambiguity. Websites often include promotional copy, duplicated pages, outdated information, and inconsistent messaging. AI systems may struggle to determine which content is authoritative.

Another issue is inconsistent structure. Many websites lack clear documentation hierarchies or logical content organization. Without clear structure, AI systems must infer relationships between pages.

Context gaps present an additional difficulty. AI models attempt to identify definitive sources, but not all websites clearly distinguish between primary documentation and marketing material.

When reliable context is missing, models may generate inaccurate conclusions. This is commonly described as hallucination.

llms.txt attempts to mitigate these issues by providing a curated roadmap to trustworthy content.

How llms.txt Works

The llms.txt file is a simple Markdown document hosted at the root of a domain, such as:

yourdomain.com/llms.txt

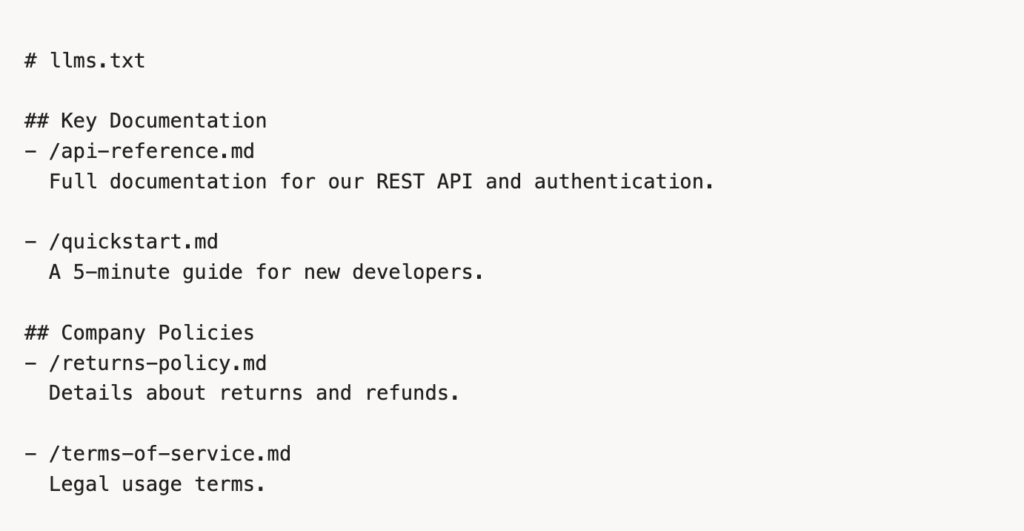

The file uses headings and bullet points to group key resources. Each entry can include a short description to provide context.

Example Structure

This structure is both human-readable and machine-friendly. Markdown formatting allows AI systems to easily parse headings, lists, and descriptions.

Unlike automated discovery methods, this file intentionally highlights high-value content.

What Content Should Be Included?

The effectiveness of llms.txt depends on selecting content that provides clarity and authority.

Technical documentation is among the most valuable inclusions. API references, integration guides, and developer documentation provide structured, factual information that AI systems can interpret reliably.

Policy and customer information also provide clear context. Pages such as return policies, pricing details, warranties, and terms of service offer definitive answers to common user questions.

Product and knowledge resources are equally valuable. Technical specifications, FAQs, and knowledge base articles provide structured insight into offerings and functionality.

Educational resources such as tutorials, implementation guides, and technical explainers can further enhance contextual understanding.

Promotional landing pages, opinion-based blog posts, and outdated resources should generally be avoided. The objective is to maximize signal while minimizing noise.

How AI Systems Interpret Website Content

Understanding how AI systems process web content clarifies where llms.txt fits into the ecosystem.

Website Content

↓

Crawler Access (robots.txt rules)

↓

Content Extraction & Parsing

↓

Semantic & Structured Data Analysis

↓

Knowledge Synthesis

↓

AI Response Generation

llms.txt has been proposed as an additional guidance layer that may help prioritize authoritative sources within this pipeline.

Who Is Using llms.txt?

Adoption remains limited but notable among developer-focused organizations.

| Organization | How They Use It | Why It Fits |

|---|---|---|

| Anthropic | Maps developer documentation | API clarity & technical depth |

| Cloudflare | Lists performance & security resources | documentation-heavy platform |

| Mintlify | Structures developer docs | documentation-first product |

| Tinybird | Organizes developer tooling resources | technical audience |

These organizations rely heavily on clear technical documentation, making them ideal early adopters. However, widespread adoption has not yet occurred.

Do AI Crawlers Actually Use llms.txt?

At present, no major AI provider officially supports llms.txt.

| Provider | Current Behavior |

|---|---|

| OpenAI (GPTBot) | Honors robots.txt but ignores llms.txt |

| Google (Gemini) | Uses Google-Extended controls via robots.txt |

| Anthropic (Claude) | Publishes its own file but does not prioritize others |

AI systems typically crawl and interpret content directly rather than relying on owner-provided summaries.

Search representatives have compared llms.txt to the deprecated meta keywords tag — a declaration that may not be treated as a trusted signal.

Comparing llms.txt with Existing Standards

Understanding its potential role requires comparison with existing technologies.

| Standard | Purpose | Importance for AI Understanding |

|---|---|---|

| robots.txt | crawler permissions | critical |

| sitemap.xml | URL discovery | important |

| schema markup | structured meaning | very high |

| semantic HTML | content clarity | essential |

| llms.txt | curated guidance | experimental |

Structured, high-quality content remains more valuable than any emerging file format.

Potential Benefits of llms.txt

Despite limited adoption, llms.txt offers several advantages.

| Benefit | Explanation |

|---|---|

| Low effort | can be created in minutes |

| Future-proofing | prepares site for potential adoption |

| Better organization | helps identify authoritative resources |

| Developer-friendly | Markdown integrates with documentation workflows |

| Zero risk | does not affect rankings or crawling |

Limitations and Concerns

There are also meaningful limitations to consider.

| Concern | Details |

|---|---|

| No proven ROI | no evidence of improved AI visibility |

| Lack of official support | not endorsed by major AI providers |

| Maintenance overhead | requires updates if URLs change |

| Possible redundancy | structured sites may not need it |

Where llms.txt May Provide the Most Value

Certain sectors may benefit more due to their documentation and structured content needs.

| Industry | Potential Use Case |

|---|---|

| Developer platforms | API documentation & SDK guides |

| E-commerce | return policies & product specs |

| Financial & legal services | compliance & policy clarity |

| Knowledge platforms | documentation-heavy resources |

| AI & SaaS startups | machine-readable content strategies |

The Bigger Picture: AI Visibility Depends on Structure

Regardless of whether llms.txt becomes a standard, one principle remains constant: AI systems prioritize clarity, structure, and authority.

Improving AI discoverability depends on:

- clear semantic headings

- structured documentation

- schema markup

- FAQ sections

- consistent terminology

- authoritative content

- accurate metadata

If content lacks clarity, no file format can compensate.

Should You Add llms.txt to Your Website?

| Scenario | Recommendation |

|---|---|

| strong documentation & technical audience | consider adding |

| interest in early adoption | worthwhile experiment |

| expecting immediate ROI | not necessary |

| limited structured content | low priority |

Declared Intent vs Observed Reality

llms.txt reflects a broader desire among website owners to influence how AI systems interpret their content.

However, AI systems prioritize observed reality over declared intent. They evaluate clarity, authority, structure, and trustworthiness rather than relying solely on declarations.

This means content quality remains the dominant factor in AI visibility.

Adoption Outlook

The future of llms.txt depends on whether major AI providers adopt standardized guidance formats.

| Possible Outcome | Likelihood | Impact |

|---|---|---|

| official AI standard | moderate | high |

| niche developer adoption | high | moderate |

| remains experimental | high | limited |

| replaced by better standards | moderate | transitional |

Final Verdict

At present, llms.txt occupies an experimental space.

- It is not essential.

- It is not harmful.

- It is not a ranking factor.

- It has no measurable ROI today.

However, it represents an early attempt to shape AI-driven discovery.

Practical Takeaway

- Focus first on structured, high-quality content.

- Ensure documentation and policies are clear.

- Use schema markup and semantic HTML.

- Monitor AI discovery trends.

- Consider llms.txt as a low-cost experiment.

If implementation takes only a few minutes, it may be worth testing. Just do not expect immediate impact.

Create Your llms.txt File

Generate a properly formatted llms.txt file in minutes using our free tool:

https://www.llmaudit.ai/llms-txt-generator

Useful References

AI Crawling & Indexing

- https://platform.openai.com/docs/gptbot

- https://developers.google.com/search/docs/crawling-indexing/google-extended

Structured Data & Standards

- https://schema.org

- https://developers.google.com/search/docs/appearance/structured-data/intro-structured-data

Documentation & Developer Resources

Conclusion

The transition from search engines to AI-driven answers is reshaping digital visibility. Organizations are no longer competing only for rankings; they are competing for inclusion in AI-generated responses.

llms.txt represents an early attempt to adapt web infrastructure for this new environment. While experimental and currently unsupported by major AI providers, it highlights a critical shift toward structured, machine-understandable knowledge.

Whether it becomes a widely adopted standard or fades away, the lesson it reinforces is clear:

In the AI era, success belongs to those who make their knowledge clear, structured, and contextually reliable.