Introduction

AI search platforms do not rank pages the same way traditional search engines do. Instead, they evaluate content quality before using it in generated answers.

If your content fails quality evaluation, it may never be cited — regardless of rankings.

This is where the LLMO content score becomes critical. Understanding how it works helps you create content that AI systems trust, retrieve, and cite in responses.

As AI-powered search grows, optimizing for LLMO content score is essential for visibility in AI-generated answers.

- Introduction

- What is LLMO Content Scoring?

- How AI Systems Use LLMO Content Scoring

- Why LLMO Content Scoring Matters for AI Search Visibility

- Core Factors in LLMO Content Scoring

- Semantic Relevance

- Topical Authority & Expertise Signals

- Trust & Credibility Signals

- Content Structure & Clarity

- Answer Utility & Practical Value

- Simplified LLMO Content Scoring Model

- Traditional SEO Ranking vs LLMO Content Scoring

- Why High Rankings Don’t Guarantee AI Citations

- Signs Your Content Has Low LLMO Content Scoring

- How to Improve LLMO Content Scoring

- LLMO Optimization Checklist

- Role of an LLM Audit in Improving LLMO Content Score

- How an LLM Audit Improves Content Selection Chances

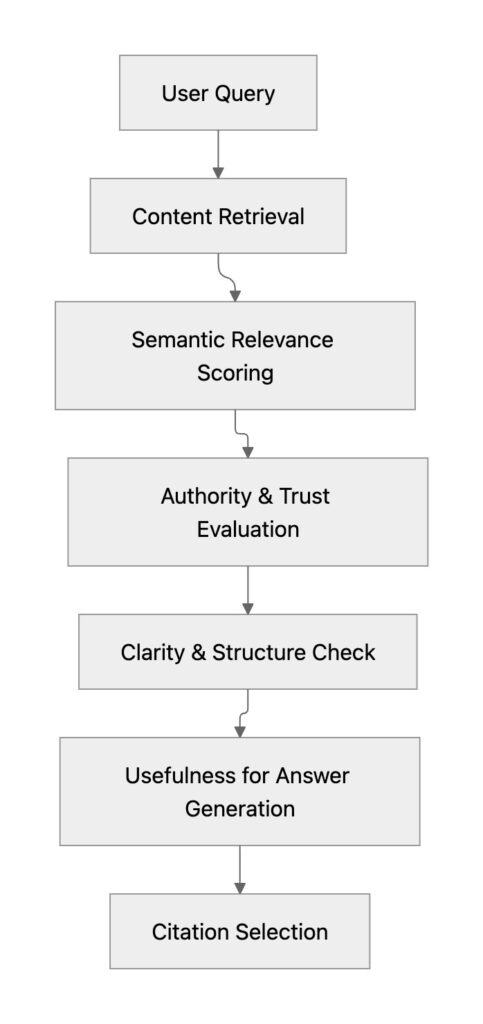

- AI Content Evaluation Flow

- Future of AI Content Evaluation

- Final Insight

What is LLMO Content Scoring?

LLMO content scoring refers to how AI systems evaluate whether content is:

- relevant to the query

- trustworthy and credible

- semantically complete

- structured for understanding

- useful for generating answers

This evaluation determines whether your content becomes eligible for AI citation.

Unlike traditional ranking factors, it focuses on usefulness, clarity, and reliability rather than backlinks or keyword density alone.

How AI Systems Use LLMO Content Scoring

AI systems retrieve multiple sources and score them before generating responses.

Only content that passes all LLMO content scoring layers is selected for citation.
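The layered selection described above can be sketched in a few lines of Python. This is only an illustration: the layer names are borrowed from the factors covered in this article, and the 0-to-1 scores, the `Source` class, and the 0.6 threshold are assumptions, since AI search systems do not publish how they score sources.

```python
from dataclasses import dataclass, field

# Hypothetical layer names, taken from the factors discussed in this article.
LAYERS = ("relevance", "authority", "trust", "structure", "utility")


@dataclass
class Source:
    url: str
    # Per-layer scores in [0, 1]; how they are measured is left open here.
    scores: dict = field(default_factory=dict)


def passes_all_layers(source: Source, threshold: float = 0.6) -> bool:
    """Return True only if every layer meets the (assumed) threshold."""
    return all(source.scores.get(layer, 0.0) >= threshold for layer in LAYERS)


def select_for_citation(sources: list, threshold: float = 0.6) -> list:
    """Keep the sources that pass every layer, preserving retrieval order."""
    return [s for s in sources if passes_all_layers(s, threshold)]


# Two made-up sources: the second is strong everywhere except trust.
retrieved = [
    Source("https://example.com/a", dict.fromkeys(LAYERS, 0.8)),
    Source("https://example.com/b", {**dict.fromkeys(LAYERS, 0.9), "trust": 0.3}),
]
eligible = select_for_citation(retrieved)
print([s.url for s in eligible])
```

In this sketch the second source is dropped even though most of its scores are higher, because a single failed layer is enough to exclude it.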

Why LLMO Content Scoring Matters for AI Search Visibility

In AI-driven search, visibility depends on whether your content:

- answers the query clearly

- demonstrates expertise

- provides trustworthy information

- is structured for machine interpretation

- helps generate useful responses

If your content lacks these signals, AI systems may ignore it.

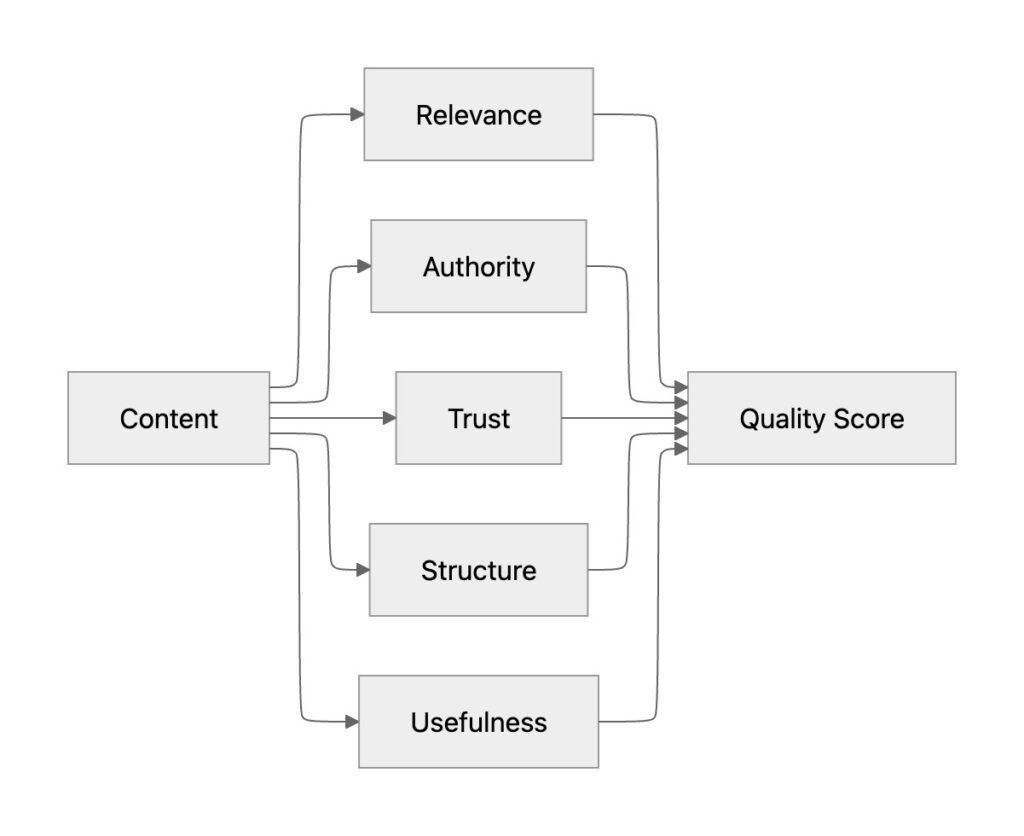

Core Factors in LLMO Content Scoring

1. Semantic Relevance

AI evaluates how well content matches the user’s intent and context.

Strong signals include:

- direct answers to questions

- complete topic coverage

- contextual clarity

- natural language explanations

Content aligned with search intent earns a higher LLMO content score.

2. Topical Authority & Expertise Signals

AI systems prioritize sources demonstrating subject expertise.

Authority indicators include:

- consistent publishing in a niche

- comprehensive topic clusters

- expert insights and depth

- industry mentions and recognition

Strong authority improves LLMO content scoring and retrieval confidence.

3. Trust & Credibility Signals

AI favors reliable and verifiable information.

Trust indicators include:

- factual accuracy

- citations and references

- author expertise

- consistent brand information

- transparency and credibility

Trust signals are essential for a high LLMO content score.

4. Content Structure & Clarity

AI systems prefer content that is easy to interpret.

Best practices include:

- clear headings and subheadings

- concise paragraphs

- bullet points and summaries

- logical information flow

Well-structured content improves parsing and scoring.
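A quick way to check these structural points on your own drafts is a small script like the one below. It is a rough sketch that assumes the draft is written in Markdown with `#` headings and `-` or `*` bullets; the function name `structure_report` and the 80-word limit for a "concise" paragraph are arbitrary choices for illustration, not thresholds used by any AI system.

```python
import re


def structure_report(markdown_text: str, max_words: int = 80) -> dict:
    """Count basic structural elements in a Markdown draft."""
    report = {"headings": 0, "bullet_blocks": 0, "paragraphs": 0, "long_paragraphs": 0}
    # Blocks are separated by one or more blank lines.
    blocks = [b.strip() for b in re.split(r"\n\s*\n", markdown_text) if b.strip()]
    for block in blocks:
        if block.startswith("#"):
            report["headings"] += 1
        elif re.match(r"[-*] ", block):
            report["bullet_blocks"] += 1
        else:
            report["paragraphs"] += 1
            # Flag paragraphs longer than the assumed word limit.
            if len(block.split()) > max_words:
                report["long_paragraphs"] += 1
    return report


draft = (
    "# Title\n\n"
    "Short intro paragraph.\n\n"
    "- point one\n- point two\n\n"
    + "word " * 100
)
print(structure_report(draft))
```

A draft with no headings, no bullet blocks, or several long paragraphs is a candidate for restructuring.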

5. Answer Utility & Practical Value

AI evaluates whether content helps generate useful answers.

Content performs better when it:

- solves a specific problem

- explains complex ideas clearly

- provides actionable insights

- includes examples and steps

Usefulness is a key driver of LLMO content score.

Simplified LLMO Content Scoring Model

Stronger combined quality signals lead to a better LLMO content score and higher citation potential.
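One simple way to picture a combined score is a weighted average of the five core factors. The sketch below is an assumption made for illustration: the weights and the function name `content_score` are invented here, and no AI search provider has published a formula like this.

```python
# Assumed weights for the five core factors; they sum to 1.0.
WEIGHTS = {
    "semantic_relevance": 0.30,
    "authority": 0.20,
    "trust": 0.20,
    "structure": 0.15,
    "utility": 0.15,
}


def content_score(signals: dict) -> float:
    """Weighted average of per-factor signals, each expected in [0, 1]."""
    total = 0.0
    for factor, weight in WEIGHTS.items():
        value = signals.get(factor, 0.0)  # a missing factor counts as 0
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{factor} must be between 0 and 1, got {value}")
        total += weight * value
    return total


# Made-up signals for a single page.
page = {
    "semantic_relevance": 0.9,
    "authority": 0.6,
    "trust": 0.8,
    "structure": 0.7,
    "utility": 0.5,
}
print(round(content_score(page), 2))
```

Because the weights sum to 1.0, the result stays between 0 and 1, and improving any single factor raises the total in proportion to its weight.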

Traditional SEO Ranking vs LLMO Content Scoring

| Factor | Traditional SEO | LLMO Content Scoring |
|---|---|---|
| Keywords | Important | Secondary |
| Backlinks | Major factor | Supporting signal |
| Rank position | Critical | Less important |
| Content clarity | Helpful | Essential |
| Trust signals | Helpful | Critical |
| Usefulness | Moderate | Primary factor |

This shift shows why LLMO is redefining content visibility.

Why High Rankings Don’t Guarantee AI Citations

Content may rank well but fail AI evaluation due to:

- shallow or generic information

- weak credibility signals

- poor structure

- lack of clarity

- limited usefulness

AI systems prioritize quality signals over ranking position.

Signs Your Content Has Low LLMO Content Scoring

- not cited in AI-generated answers

- competitors appear in AI responses

- content lacks depth or clarity

- inconsistent trust signals

- poorly structured formatting

These indicate weak evaluation scores.

How to Improve LLMO Content Scoring

Focus on Intent Completeness

Answer the full user question.

Build Topical Authority

Create content clusters around your expertise.

Improve Credibility Signals

Add references, data, and expert insights.

Structure Content Clearly

Use headings, summaries, and logical formatting.

Provide Practical Value

Offer actionable insights and examples.

LLMO Optimization Checklist

| Optimization Area | Action | Impact |
|---|---|---|
| Intent coverage | Answer complete queries | High |
| Authority | Publish niche expertise | High |
| Trust signals | Add data & citations | High |
| Structure | Improve formatting | High |
| Clarity | Simplify explanations | Medium |
| Utility | Provide actionable insights | High |

Role of an LLM Audit in Improving LLMO Content Score

An LLM audit evaluates how AI systems interpret and score your content.

It helps identify:

- content quality gaps

- authority weaknesses

- semantic coverage issues

- trust signal deficiencies

- citation eligibility barriers

Regular audits help ensure your content meets LLMO content evaluation standards.

Explore LLM Audit to analyse your website's AI presence.

How an LLM Audit Improves Content Selection Chances

An audit strengthens visibility by:

- identifying low-quality sections

- improving semantic completeness

- strengthening trust and credibility signals

- enhancing structure and clarity

- increasing citation eligibility

This helps AI systems confidently select your content.

AI Content Evaluation Flow

Content must pass evaluation before being cited.

Future of AI Content Evaluation

AI search is evolving toward:

- trust-driven retrieval

- entity-based understanding

- context-aware answers

- usefulness-first ranking

Content optimized for LLMO content score will dominate AI search visibility.

Learn more about the future of artificial intelligence in the next 10 years.

Final Insight

AI systems do not select content randomly. They evaluate relevance, trust, structure, and usefulness before generating answers.

If your content shows strong LLMO content score signals, it becomes eligible for citation and retrieval.