Search is rapidly evolving from traditional search engines to AI-powered answer engines. Instead of showing a list of websites, tools like ChatGPT, Perplexity, and Google’s AI Overviews generate direct answers by synthesizing information from across the web. According to research from Semrush, a growing number of users now rely on AI assistants to discover products, services, and information.

This shift introduces a new visibility challenge: being cited inside AI-generated answers. Even if your website ranks well on Google, it may still be ignored by AI systems that prioritize structured content, authority signals, and trusted sources.

This phenomenon is known as AI citation bias: large language models (LLMs) repeatedly reference a small group of websites while overlooking many other relevant sources. Businesses that want to remain discoverable in AI search must understand how these systems choose sources and how to use LLM audits to improve their AI visibility.

The Shift from Search Results to Synthesized Answers

Traditional search engines function as navigational tools. Users type a query and receive a list of websites that might contain the answer.

AI assistants, however, behave differently.

Consider questions like these:

- “What is the best CRM for startups?”

- “Which AI tools should a marketing team use?”

- “How does generative engine optimization work?”

For questions like these, the AI system does not simply show links. Instead, it analyzes multiple sources, summarizes the information, and presents a single coherent response.

This creates a major shift in digital visibility.

Rather than competing for rankings on a results page, websites are now competing to become one of the few sources used by the AI when generating its answer.

In many cases, only three to five sources appear in an AI-generated response. Thousands of other pages that contain useful information are never referenced.

This raises an important question:

Why does AI choose certain sources while ignoring others?

The Emergence of AI Citation Bias

The answer lies in how LLMs retrieve and evaluate information.

AI assistants typically rely on a system known as retrieval-augmented generation (RAG). In this approach, the AI retrieves relevant information from external sources and then uses that information to generate a response.
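As a rough illustration of the retrieval step, the sketch below ranks a few made-up pages against a query using simple bag-of-words cosine similarity and keeps only the best matches as context for the answer. Production assistants use learned embeddings and far larger indexes, so treat this as a toy model. The page names, page texts, and the `retrieve` helper are all hypothetical.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase a string and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, pages, k=2):
    """Return up to k page names, most similar to the query first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(text))), name) for name, text in pages.items()]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best score first, name breaks ties
    return [name for score, name in scored[:k] if score > 0]

# Hypothetical pages, invented for illustration only.
PAGES = {
    "crm-guide.example": "best crm for startups compared by price and features",
    "recipes.example": "easy pasta recipes for busy weeknights",
    "sales-blog.example": "how startups choose a crm and sales tools",
}

context_sources = retrieve("What is the best CRM for startups?", PAGES)
print(context_sources)  # ['crm-guide.example', 'sales-blog.example']
```

Only the pages returned by `retrieve` would be handed to the model as context. Everything else in the index never reaches the answer, no matter how useful it is.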

However, the retrieval process is not neutral.

Several subtle factors influence which sources the model retrieves first, including:

- historical training data

- domain authority signals

- semantic similarity

- content structure

- citation patterns across the web

Over time, these factors create a feedback loop.

If an AI system frequently cites a particular source, that source becomes more likely to appear again in future responses. Meanwhile, newer or lesser-known sources may struggle to break into this cycle.

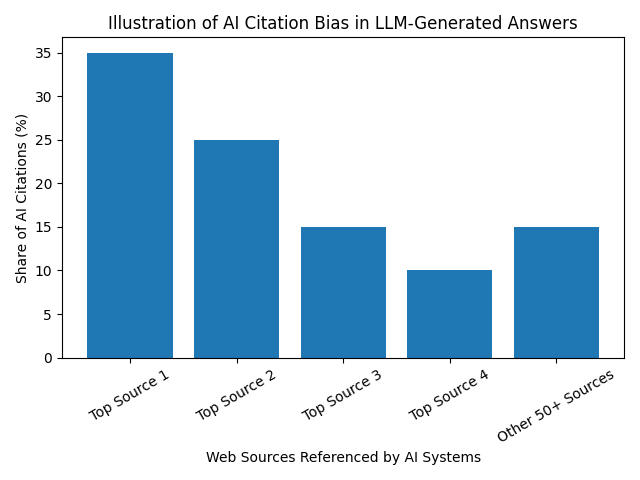

This feedback loop produces AI citation bias, where a small number of websites dominate AI-generated answers across many queries.
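The feedback loop can be made concrete with a small simulation. This is a toy model under stated assumptions, not a description of how any particular assistant works: each round cites the top-scoring sources, and every past citation adds a fixed `boost` to a source's future score. The domain names, relevance values, and the `boost` parameter are hypothetical.

```python
def simulate_citations(relevance, prior_citations, rounds=10, slots=2, boost=0.1):
    """Cite the top `slots` sources each round; past citations raise future scores."""
    counts = dict(prior_citations)
    for _ in range(rounds):
        # Assumed scoring rule: base relevance plus a bonus per earlier citation.
        scores = {name: relevance[name] + boost * counts.get(name, 0) for name in relevance}
        ranked = sorted(scores, key=lambda name: (-scores[name], name))
        for name in ranked[:slots]:
            counts[name] = counts.get(name, 0) + 1
    return counts

# Hypothetical sources: the newcomer is the most relevant but has no citation history.
RELEVANCE = {
    "incumbent-a.example": 0.70,
    "incumbent-b.example": 0.65,
    "newcomer.example": 0.80,
}
HISTORY = {"incumbent-a.example": 3, "incumbent-b.example": 2, "newcomer.example": 0}

with_history = simulate_citations(RELEVANCE, HISTORY)
without_history = simulate_citations(RELEVANCE, {})
print(with_history["newcomer.example"])     # 0
print(without_history["newcomer.example"])  # 10
```

In this model the newcomer is cited in every round when all sources start equal, but is never cited once the incumbents begin with a small head start. The advantage compounds with each round.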

Why Citation Bias Matters for Businesses

The implications of AI citation bias are enormous.

In traditional SEO, even if a website ranked lower on the results page, users could still scroll and discover it.

In AI search, that opportunity disappears.

If an AI assistant cites only a handful of sources, any business outside that small set effectively becomes invisible to users relying on AI-generated answers.

This can affect several areas of digital growth:

Reduced Brand Discovery

If AI systems consistently reference the same few sources, emerging brands may struggle to gain visibility — even if their products are competitive.

Concentration of Authority

Over time, AI citation bias may concentrate authority among a limited number of websites, reinforcing their dominance in AI search.

Invisible Competition

Businesses may lose potential customers without ever realizing it. If AI assistants never mention a brand, users may never know the brand exists.

Understanding this hidden layer of visibility is therefore critical for companies operating in an AI-driven information environment.

The Factors That Influence AI Citations

Although the exact mechanisms behind AI citation behavior are still evolving, several patterns have begun to emerge.

These patterns reveal how LLMs decide which sources to trust and reference.

1. Entity Recognition

AI models tend to favor sources associated with well-recognized entities.

Entities include brands, organizations, authors, or topics that appear frequently across the web. If a brand has strong entity recognition — meaning it is mentioned consistently across multiple platforms — it becomes easier for AI systems to identify and trust it.

This means brand presence across multiple channels can influence AI citations.

2. Semantic Clarity

AI models prioritize content that is easy to interpret.

Content written with clear explanations, structured headings, and logical flow is more likely to be extracted by AI systems. In contrast, complex or poorly structured pages may be overlooked even if they contain valuable information.

Semantic clarity therefore plays a key role in AI visibility.

3. Knowledge Graph Connections

AI search systems may draw on knowledge graphs to understand relationships between concepts.

If a website’s content aligns well with existing knowledge graph structures — such as linking related topics or entities — it becomes easier for AI systems to contextualize the information.

This increases the likelihood that the content will be used in AI-generated answers.

4. Cross-Domain Mentions

AI assistants analyze signals from across the internet.

If multiple credible websites mention a brand or reference its content, the AI system may treat that brand as a reliable source.

This creates a form of distributed authority that influences citation probability.

5. Content Extractability

One of the most overlooked factors in AI visibility is extractability.

AI systems must be able to extract concise insights from a page in order to incorporate them into an answer. Content that contains clear summaries, bullet points, definitions, and structured explanations is much easier for AI models to extract.

Pages that bury key insights inside long paragraphs may be less likely to be cited.

The “Invisible Rank” in AI Search

In traditional search engines, rankings are visible.

Users can see whether a page appears in position #1 or #10.

In AI search, however, rankings exist in a hidden form.

Before generating an answer, the AI system internally ranks potential sources based on relevance and credibility. Only the top-ranked sources are used in the final response.

This hidden ranking layer can be thought of as the “invisible rank” of AI search.

Businesses cannot see this ranking directly, but its effects determine whether their brand appears in AI answers.

Understanding this invisible ranking layer is becoming a key objective of modern LLM audits.
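One way to picture the invisible rank is as a weighted score followed by a hard cutoff. The sketch below assumes each candidate source already has a relevance and a credibility value between 0 and 1. Real systems do not expose such numbers, and the `Candidate` fields, the `relevance_weight` parameter, and the example domains are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    domain: str
    relevance: float    # 0..1, how well the page matches the query (assumed)
    credibility: float  # 0..1, how trusted the source appears (assumed)

def invisible_rank(candidates, top_k=3, relevance_weight=0.6):
    """Rank candidates by a weighted score and split them into cited and unseen."""
    def score(c):
        return relevance_weight * c.relevance + (1 - relevance_weight) * c.credibility
    ranked = sorted(candidates, key=lambda c: (-score(c), c.domain))
    cited = [c.domain for c in ranked[:top_k]]
    unseen = [c.domain for c in ranked[top_k:]]
    return cited, unseen

# Hypothetical candidates for a single query.
CANDIDATES = [
    Candidate("big-publisher.example", relevance=0.60, credibility=0.95),
    Candidate("niche-expert.example", relevance=0.90, credibility=0.40),
    Candidate("vendor-docs.example", relevance=0.80, credibility=0.70),
    Candidate("new-startup.example", relevance=0.85, credibility=0.20),
    Candidate("forum-thread.example", relevance=0.50, credibility=0.50),
]

cited, unseen = invisible_rank(CANDIDATES)
print(cited)   # ['vendor-docs.example', 'big-publisher.example', 'niche-expert.example']
print(unseen)  # ['new-startup.example', 'forum-thread.example']
```

Note that `new-startup.example` has the second-highest relevance yet falls below the cutoff because of its low credibility value. From the outside, the only visible result is that it never appears in the answer.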

Detecting Citation Bias Through LLM Audits

An LLM audit helps uncover patterns in AI citation behavior.

By testing large numbers of prompts across different AI platforms, analysts can observe which sources appear repeatedly and which are absent.

This analysis reveals several important insights:

- which competitors dominate AI answers

- which topics trigger specific citations

- how often a brand is mentioned

- which prompts exclude the brand entirely

These findings allow organizations to identify gaps in their AI visibility strategy.
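The tallying part of an audit can be sketched in a few lines. The example assumes the cited domains for each prompt have already been collected (by hand or with whatever tooling is available) into a dictionary. The `audit_citations` helper, the prompts, and the domains are hypothetical.

```python
from collections import Counter

def audit_citations(responses, brand):
    """Summarize which domains are cited across prompts and where `brand` is missing."""
    counts = Counter()
    missing = []
    for prompt, cited in responses.items():
        counts.update(set(cited))  # count each domain at most once per prompt
        if brand not in cited:
            missing.append(prompt)
    total = len(responses)
    # Sort by citation count, then by name, so ties are reported consistently.
    top = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))[:3]
    return {
        "top_sources": top,
        "brand_share": counts[brand] / total if total else 0.0,
        "prompts_missing_brand": missing,
    }

# Hypothetical audit data: prompt -> domains cited in the AI answer.
RESPONSES = {
    "best crm for startups": ["bigcrm.example", "reviews.example", "ourbrand.example"],
    "crm pricing comparison": ["bigcrm.example", "reviews.example"],
    "crm for small sales teams": ["bigcrm.example", "analyst.example"],
    "how to migrate crm data": ["reviews.example", "ourbrand.example"],
}

report = audit_citations(RESPONSES, brand="ourbrand.example")
print(report["top_sources"])            # [('bigcrm.example', 3), ('reviews.example', 3), ('ourbrand.example', 2)]
print(report["brand_share"])            # 0.5
print(report["prompts_missing_brand"])  # ['crm pricing comparison', 'crm for small sales teams']
```

The same report covers the four questions listed above: who dominates, which prompts trigger which citations, how often the brand appears, and which prompts leave it out.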

Strategies to Overcome AI Citation Bias

Although citation bias exists, businesses are not powerless.

Several strategies can increase the likelihood of being referenced by AI assistants.

Build Strong Entity Presence

Consistent brand mentions across the web strengthen entity recognition.

Publishing research, contributing to industry discussions, and appearing in authoritative publications can improve this presence.

Create AI-Friendly Content

Content designed for AI extraction tends to perform better in generative search environments.

This includes:

- structured headings

- concise explanations

- lists and bullet points

- FAQ sections

- clear definitions

These formats make it easier for AI models to extract and summarize information.

Develop Topical Authority

AI models prefer sources that demonstrate deep expertise in a particular domain.

Publishing multiple high-quality articles around a specific topic cluster increases the likelihood that AI systems will recognize a website as an authority.

Expand Cross-Platform Mentions

Mentions on trusted platforms — including industry blogs, research publications, and media outlets — reinforce credibility signals.

These signals may influence AI systems when selecting sources.

The Future of AI Discovery

As AI assistants become more integrated into everyday life, the way people discover information will continue to evolve.

Instead of browsing dozens of websites, users will increasingly rely on AI-generated summaries to guide decisions.

This transformation will reshape the digital visibility landscape.

Businesses will no longer compete solely for search rankings. They will compete for inclusion inside AI-generated knowledge.

In this new environment, understanding AI citation behavior will be essential.

Final Thoughts

The rise of AI search has introduced a new and largely invisible layer of digital visibility.

AI citation bias determines which brands are mentioned in AI-generated answers and which remain unseen. Unlike traditional search rankings, this selection happens inside the retrieval and generation pipeline of an AI system, where it cannot be observed directly, making it difficult to analyze without specialized tools.

For businesses navigating this new landscape, understanding the hidden ranking layer of AI search is critical.

An LLM audit provides the insights needed to detect citation patterns, identify visibility gaps, and improve the likelihood that a brand will be referenced by AI assistants.

As generative AI becomes the primary interface between users and information, one question will define the future of digital marketing:

Is your brand part of the AI’s knowledge — or invisible to it?